As a content designer at Public Health England (PHE), I’m often asked to support digital projects. Typically, content designers join our projects in the beta and live phases of development.

For our project on evaluating digital public health, we tried something new.

We wanted to know what would happen if we brought content design into the earlier, alpha phase, and what difference content design input could make to the project's success.

In the past, projects have failed their alpha service assessment because assessors felt that not enough attention had been paid to content.

With that experience in mind, I joined the project’s multidisciplinary team and I’d like to share what we’ve learned.

About the project

Our project helps teams working on digital health interventions to design evaluation strategies and measure their intervention’s success from the start, rather than approaching evaluation as an afterthought.

This work, and the user research that supports it, has brought together academics, healthcare professionals and digital specialists from across the health system. With so many expert perspectives involved, there was a clear need for us to speak a common language.

Defining our mission

Before we even spoke to our users, we first had to make sure that our multidisciplinary team had a shared understanding of the project.

So we took a close look at the project’s mission statement: a concise, one-paragraph pitch that summarises what we hope to achieve.

Our mission statement started out as:

Our digital exemplar will enable Public Health England to support and develop digital public health interventions that can prove their effectiveness and benefit to public health.

But as we workshopped this with our team and advisory group, we began to spot problems with it:

- we’re proud of our project’s place in PHE Digital’s wider digital transformation programme – but was this really relevant to our users?

- ‘effectiveness’ and ‘benefit’ aren’t well defined – what exactly do we mean by them?

- ‘prove’ is a strong word, particularly in the context of evaluations – could this cause trouble for us further down the line?

These were just some of the concerns and queries the team brought to the table. We then worked on new iterations until we reached a consensus.

A few versions later, we had a mission statement we all agreed on:

Enable Public Health England to better demonstrate the impact, cost-effectiveness and benefit of digital health interventions to public health.

Writing with experts

With the team aligned and initial concepts explored, it was time to go deeper.

A central component of the project is an ‘evaluation library’: a selection of different academic, health economic and digital evaluation methods.

The written descriptions of these methods were factually accurate, but were full of language that could lead to real confusion for non-specialists. Other content designers won’t be surprised that we found:

- unexpanded acronyms

- confusing technical terminology

- fragmented descriptions and incomplete sentences

Some of this was easily fixed, but some of it required knowledge from subject matter experts. We therefore planned a series of pair-writing workshops.

I worked with our subject matter experts to find language we agreed on – focusing on refining technical explanations, acronyms and ambiguous phrases. I was pleasantly surprised by our experts’ willingness to compromise on the level of detail (and jargon) to include. Their support was also essential for filling the gaps in my own knowledge, so we knew what not to change.

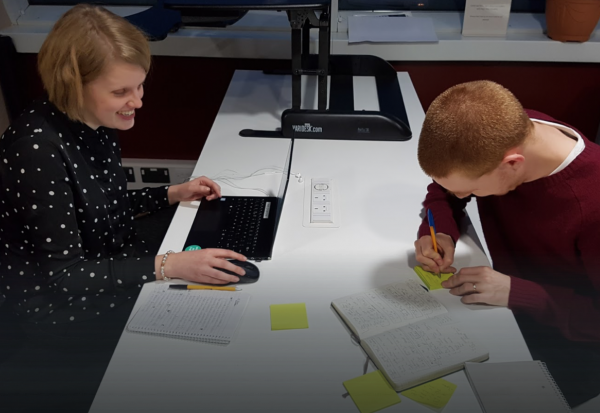

However, there’s a limit to this approach, which relies on a 2-way dialogue between a content designer and a subject matter expert. Working with the project lead, I designed an exercise to help us understand how our wider audience would interpret our content.

Sense-checking exercise

For this sense-checking exercise, we took snippets of content from the project, including definitions of our most important terms, as well as examples of particularly technical or complex language from the evaluation library.

We then spoke with a number of people who could help us test it, including:

- another content designer with no prior knowledge of this project

- a product owner

- a senior academic colleague

In each session, we sat with a participant one-to-one and read them the excerpts. I then asked one simple question: "Did that make sense to you?"

If it did – great. But if not, we found other ways to explain the idea, repeatedly testing different variations until it ‘clicked’. At the same time, another researcher recorded the outcome of the discussion and any relevant comments.

The benefit was 2-fold:

- if our participants consistently agreed that the language was wrong, regardless of their differing backgrounds, then it was clear something had to change

- our expert participants were able to pick up on language that passed unchallenged by non-specialists but was clearly wrong to a specialist audience

Gathering insights

We’ve learned a lot from the content design process, developing insights that will inform the rest of the project. Some of the most important insights are as follows:

- academic language can be intimidating to non-academics, and this can put teams off doing effective evaluations. Where I can’t avoid academic language, I’ll use comparisons and analogies from other fields to make the language easier to understand

- for a public health service, our language and examples were too clinically focused. The team and I need to make sure the examples we use and information we give are relevant to our users

- even common terms like ‘outcome’ and ‘intervention’ have conflicting and overlapping definitions for different user groups. The team and I need to be explicit about our own definitions of these terms and what they mean for the project

- we can strengthen technical or confusing content with in-context definitions and explanations. This is a well-established principle in content design, and my research has shown us where the pain points are in our content. Explanations do not have to be written: many of our users suggested diagrams or animations

What's next?

As the project moves into the beta phase, we are iterating the prototypes and continuing to test with users. As a content designer, I’ll be listening and learning from our users’ experiences to make improvements.

The ultimate aim is an end product that’s accessible, intelligible and easy to use for all its varied audiences.

Find out more about evaluating digital public health